Enterprise AI adoption has accelerated dramatically over the past two years. What began as isolated experiments has evolved into a sprawling ecosystem of tools, models, frameworks and platforms, each promising productivity, efficiency or competitive advantage. Yet beneath the excitement, something more subtle and far more consequential is unfolding. Enterprises are not building a single, coherent AI capability. They are unintentionally creating dozens of disconnected ones.

In one part of the organisation, a team is experimenting with OpenAI. Elsewhere, another group is working with Hugging Face models. A third team is building a LangChain prototype, while a fourth is developing a Vertex AI workflow. Somewhere in the background, a fine‑tuned Llama model is running on a GPU cluster that has gone undocumented for months. Meanwhile, SaaS tools with embedded AI features quietly store sensitive data in external clouds.

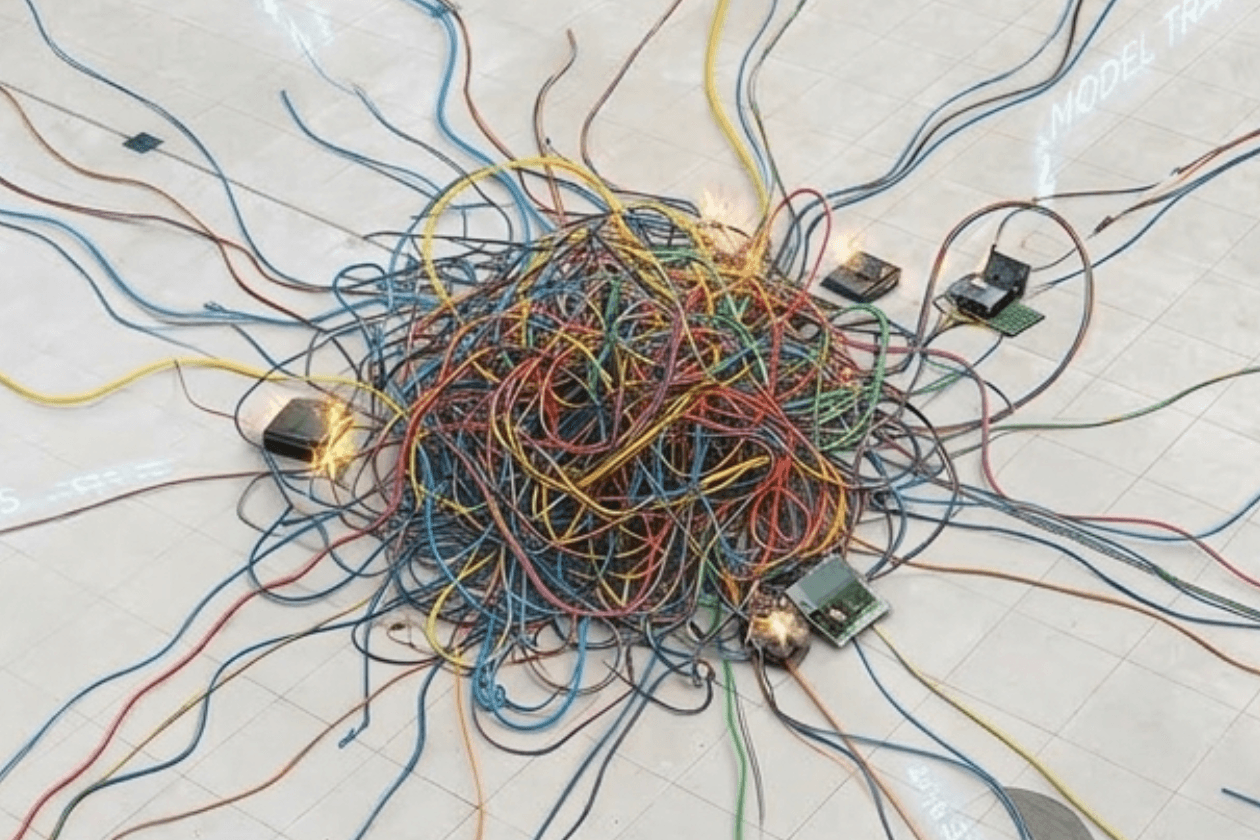

This is the emerging reality of Sprawl AI, the uncontrolled, decentralised and often invisible spread of AI tools and systems across an organisation. It is not innovation. It is fragmentation. And it is rapidly becoming one of the most significant strategic risks facing modern technology leaders.

This analysis explores why Sprawl AI is accelerating, why it is so difficult to detect and what CTOs and CIOs must do before it becomes unmanageable.

The Accidental Architecture Behind Sprawl AI

Most enterprises never intended to create Sprawl AI. It emerged organically, driven by several converging forces that individually seem harmless but collectively create architectural chaos.

The first force is the democratisation of AI tools. AI is no longer confined to machine‑learning teams. Marketing departments now rely on content‑generation platforms. Sales teams install AI‑enabled CRM plugins. Finance teams experiment with forecasting models. Engineering teams prototype with open‑source LLMs. Product teams integrate AI features directly into applications. Each decision makes sense in isolation, but together they form a patchwork of incompatible systems, which is the defining pattern of Sprawl AI.

The second force is the pace of innovation, which continues to outstrip governance. AI governance frameworks are still maturing, while new models, APIs and platforms appear almost weekly. By the time a governance committee evaluates one tool, several more have already been adopted by teams eager to move quickly.

The third force is the rise of Shadow AI. When official AI capabilities lag behind demand, teams improvise. They use personal accounts, external tools, unreviewed prototypes and fine‑tuned models without MLOps support. Shadow AI is rarely malicious; it is usually a direct response to unmet needs. But it is also one of the primary accelerants of Sprawl AI.

The Illusion of Progress

On the surface, the explosion of AI tools looks like innovation. Leaders see activity, experimentation, and enthusiasm. Dashboards show increased AI usage. Teams report productivity gains. But beneath this apparent progress lies a very different operational reality.

Sprawl AI leads to redundant models and duplicated effort. It is common to find multiple teams building similar classifiers, departments fine‑tuning their own LLMs, parallel data pipelines emerging independently, and repeated integrations with the same external APIs. Every duplicated model increases cost, risk and maintenance overhead.

It also results in inconsistent performance and unpredictable outcomes. Different tools produce different accuracy levels, latency profiles, failure modes and security postures. Achieving enterprise‑wide reliability becomes nearly impossible.

Data governance becomes chaotic as Sprawl AI scatters data across SaaS tools, cloud platforms, local environments, personal accounts and unmanaged storage. Security teams cannot protect what they cannot see.

Costs also spiral quietly. AI costs rarely spike; they accumulate. GPU clusters run longer than expected. SaaS subscriptions multiply. Fine‑tuning jobs consume more compute than planned. Inference workloads grow unpredictably. Sprawl AI hides these costs until they become significant.

Why Sprawl AI Is Accelerating, Not Slowing Down

Many leaders assume Sprawl AI is a temporary phase that will naturally consolidate. In reality, the opposite is happening.

The model landscape is diversifying rapidly. Enterprises are moving from a world dominated by a few major models to one filled with specialised domain models, small task‑specific models, open‑source alternatives and fine‑tuned internal variants. Each new model type introduces new fragmentation.

Vendors are embedding AI everywhere. Nearly every SaaS product now markets itself as “AI‑powered,” which means AI enters the enterprise through dozens of side doors.

Teams are under pressure to deliver AI features quickly. They cannot wait for centralised AI platforms to mature, so they build what they need, when they need it.

The barrier to entry continues to drop. With tools like LangChain, LlamaIndex and low‑code AI builders, anyone can assemble an AI workflow in hours. The easier it becomes to build, the faster Sprawl AI grows.

The Strategic Risks Leaders Can No Longer Ignore

Sprawl AI is not just a technical inconvenience. It is a strategic threat.

The first risk is security exposure. Every unmanaged model, API or tool becomes a potential leak point for sensitive data, proprietary code, customer information or internal documents. Security teams cannot secure what they cannot see.

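The second risk is compliance failure. Regulations around AI transparency, data lineage and model governance are tightening, with the EU AI Act the most prominent example. Sprawl AI makes compliance nearly impossible, because an organisation cannot document lineage or demonstrate oversight for systems it does not know exist.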

The third risk is operational fragility. When AI systems are inconsistent and uncoordinated, failures cascade unpredictably. A model update can break a downstream workflow. A vendor API change can cause prototypes to fail. A fine‑tuned model can drift without anyone noticing.

The fourth risk is the loss of strategic coherence. AI should be a force multiplier, but Sprawl AI turns it into a collection of isolated experiments.

The fifth risk is rising long‑term cost. Sprawl AI creates duplicated compute, duplicated storage, duplicated engineering effort, and duplicated vendor spend. The cost curve bends upward quietly, then suddenly.

What Leaders Need to Do Now

The solution is not to slow down AI adoption. It is to channel it.

Leaders must begin by building an internal AI platform, even if it starts small. A central platform should provide approved models, offer secure data access, standardise pipelines, support experimentation and integrate with existing systems. The goal is enablement, not control. (For more information, see Fenxlab’s ARC for Enterprise solution.)
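To make the idea of "approved models" more concrete, here is a minimal sketch of a single gateway that serves only models the platform has approved and meters usage per team, so the quiet cost accumulation described earlier becomes visible. Everything in it is hypothetical: the `AIGateway` class, the backend signature (a function returning response text and a token count) and the team and model names are illustrative assumptions, not part of any product or library mentioned in this article.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Callable

# Assumed backend shape (hypothetical): takes a prompt, returns (response text, tokens used).
Backend = Callable[[str], tuple[str, int]]


class AIGateway:
    """Hypothetical single entry point: serves only approved models, meters usage per team."""

    def __init__(self) -> None:
        self._backends: dict[str, Backend] = {}
        self._usage: defaultdict[tuple[str, str], int] = defaultdict(int)

    def approve(self, model: str, backend: Backend) -> None:
        # Adding a model to the platform is an explicit, recorded decision.
        self._backends[model] = backend

    def complete(self, team: str, model: str, prompt: str) -> str:
        backend = self._backends.get(model)
        if backend is None:
            raise PermissionError(f"model not approved on the platform: {model}")
        text, tokens = backend(prompt)
        self._usage[(team, model)] += tokens  # per-team, per-model metering
        return text

    def usage_by_team(self) -> dict[str, int]:
        # Roll per-model usage up to a per-team total for cost reporting.
        totals: defaultdict[str, int] = defaultdict(int)
        for (team, _model), tokens in self._usage.items():
            totals[team] += tokens
        return dict(totals)


if __name__ == "__main__":
    gateway = AIGateway()
    # Stub backend standing in for a real model: truncates the prompt, counts words as "tokens".
    gateway.approve("small-summariser", lambda p: (p[:20], len(p.split())))
    gateway.complete("finance", "small-summariser", "forecast next quarter revenue")
    print(gateway.usage_by_team())  # {'finance': 4}
```

The design choice worth noting is that approval and metering sit in the same place: a team that uses the platform gets access to vetted models, and the organisation gets a usage ledger as a side effect, without asking anyone to fill in a report.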

They must also create a “paved road” for AI development. Teams naturally choose the path of least resistance, so the official path must be the easiest one. This reduces the incentives that fuel Sprawl AI.

Lightweight governance is essential. Governance should guide, not block. It should include model registration, data usage policies, risk classification, monitoring requirements and lifecycle management. Simplicity is key; governance must be easy enough that teams actually follow it.
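A model registry is one of the simplest places to start with lightweight governance. The sketch below is a minimal, in-memory illustration under assumed conventions: `ModelRecord`, `ModelRegistry`, the three `RiskTier` levels and the rule that high-risk models must be monitored and carry a review date are all hypothetical choices, not a standard or regulatory scheme.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class RiskTier(Enum):
    # Hypothetical tiers; a real scheme depends on the organisation and its regulators.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class ModelRecord:
    name: str
    owner: str              # accountable team or person
    source: str             # e.g. "hosted API", "open-source", "internal fine-tune"
    risk_tier: RiskTier
    data_classes: set[str] = field(default_factory=set)  # categories of data the model touches
    monitored: bool = False
    review_due: date | None = None  # next lifecycle review


class ModelRegistry:
    """Hypothetical in-memory registry: a model counts as approved only once registered."""

    def __init__(self) -> None:
        self._records: dict[str, ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        if record.name in self._records:
            raise ValueError(f"model already registered: {record.name}")
        # Example policy (assumed): high-risk models need monitoring and a review date.
        if record.risk_tier is RiskTier.HIGH and (
            not record.monitored or record.review_due is None
        ):
            raise ValueError(
                f"high-risk model {record.name!r} needs monitoring and a review date"
            )
        self._records[record.name] = record

    def is_registered(self, name: str) -> bool:
        return name in self._records

    def overdue_reviews(self, today: date) -> list[str]:
        # Models whose lifecycle review date has passed, sorted for stable output.
        return sorted(
            r.name
            for r in self._records.values()
            if r.review_due is not None and r.review_due < today
        )


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register(
        ModelRecord("ticket-classifier", "support-eng", "internal fine-tune",
                    RiskTier.HIGH, {"customer tickets"}, monitored=True,
                    review_due=date(2025, 1, 31))
    )
    print(registry.overdue_reviews(date(2025, 3, 1)))  # ['ticket-classifier']
```

In practice the registry would live in a shared database or an existing MLOps tool rather than in memory; the point of the sketch is that registration, risk classification, monitoring and lifecycle review can be expressed as a handful of simple, enforceable rules.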

Consolidation should occur where it matters most. Standardising model hosting, data access, security controls, monitoring and compliance workflows creates a stable foundation while still allowing teams to innovate on top.

Finally, enterprises must treat AI as a product, not a project. AI capabilities require owners, roadmaps, SLAs, documentation, versioning and support. Without product thinking, Sprawl AI is inevitable.

The Future: From Sprawl to Federation

The goal is not to force every team into a single AI stack. That is unrealistic and counterproductive. The future is federated AI, an approach in which organisations maintain centralised foundations while enabling decentralised innovation. Federated AI provides shared governance, consistent security, reusable components and transparent model catalogues. It preserves speed while reducing risk. (Discover more at askarc.app.)

Conclusion: Sprawl AI Is the Quiet Crisis Leaders Can’t Ignore

Sprawl AI does not announce itself. It does not break things immediately. It creeps, one tool at a time, one model at a time, one well‑intentioned experiment at a time. By the time leaders notice, the complexity is entrenched.

The enterprises that win the next decade will not be the ones that adopt AI the fastest. They will be the ones that adopt it coherently. They will be the organisations that turn Sprawl AI into a federated, scalable capability and treat AI not as a collection of tools but as a strategic system.

This is the moment to act, before Sprawl AI becomes a full‑blown operational crisis. To explore the challenges of Sprawl AI in more depth, we encourage you to download our latest Strategic Paper on this topic.